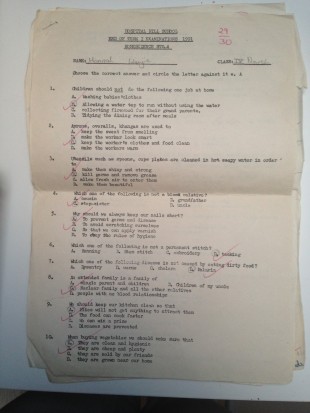

My primary school had some quite strong incentives to drive up performance. At the end of every term, our teachers would test us and rank my classmates and me against each other. It was the extremes that mattered – top and bottom. Wherever I came in the rankings, I was mostly just thankful that I wasn't the kid at the bottom – it was certainly very motivating!

Now, many years later, I'd like to think that I'm motivated by a wider range of incentives than ranking. I have personal goals and don't need to "win" or "be praised". But ranking did make a difference to my performance back then. This week, a report will be published that will, to a degree, try to act as a motivator around the question "Who is best at delivering and using development cooperation?"

There are three notable points about this report. First, its authors are statisticians and experts from UNDP and OECD who are also members of the Support Team for the Global Partnership for Effective Development Cooperation.

Second, many countries and organisations have volunteered to be included in the report. To be exact, 46 countries that receive development cooperation – from other countries, multilateral organisations and other organisations – submitted data to inform the report. And the countries and organisations to which the data related – including DFID – checked the data to make sure it was accurate. No one asked for their data to be withheld. So, in principle, it would seem that many development actors are happy to be ranked on their performance.

Third, although the report will collate data and analysis on 10 different indicators, it will rank countries on only one of them: an indicator of the transparency of development cooperation. The reason for this is that ranking is political.

Most rankings that we read about in the papers or see on TV – whether of how open budgets are, perceptions of corruption or international technology policy – are produced by independent organisations. But, as mentioned earlier, the authors of this report work at UNDP and OECD. They are world-class experts, which means that the data and information in the report are credible. But UNDP and OECD are not independent of the countries and organisations submitting the data, and this is why ranking is too political for them. When we were children, if someone had asked our parents or our classmates to rank us, they would have struggled to be objective and would have worried about creating trouble for themselves. Even agreeing on the criteria for the ranking might have been very difficult. That's why, as a child, it was the teachers who – objectively – ranked me and my classmates.

The key reason transparency can be ranked in the report is that an independently published report on transparency already exists and has become well known and well respected. This independent report has helped ensure that the criteria for transparency are now broadly well understood. But for the other 9 indicators and issues raised in this week's report, no such credible, independent rankings exist yet.

The question is: even if the report is credible, will it motivate and drive up performance the way ranking at my primary school motivated me? Without ranking, it might not. But then again, the international development community might not need this kind of incentive to make improvements. Here in the UK, for example, a huge incentive to improve our cooperation with other countries has come from UK taxpayers' pressure to demonstrate results and value for money. In countries that receive cooperation, citizens press their leaders to have a vision for development and to use cooperation to deliver that vision. These citizens often have the power to change their votes or stop paying taxes if cooperation is failing.

But there is still a role for ranking – especially where taxpayers or citizens can't actually voice their concerns openly. And if the data is robust and the indicators and criteria are really relevant and well understood, ranking could be very useful for motivating performance.

This week's report will be a great step in the right direction for driving improvements around how we all work together to deliver development. But if we want more ranking to be introduced for policies other than transparency, we will need many more independent reports on those other policies to be published in the coming years.

We need some objective teachers in the development community to start some new tests.


4 comments

Comment by Ian Thorpe

This is a really great initiative. Rankings have long been used as an advocacy tool to compare countries on development issues – so why not aid organizations too? A few thoughts on the good and bad of rankings:

The good:

Rankings and published comparisons can be a good source of information for donors to choose between organizations (e.g. for DFID in choosing the best multilateral channels for aid, or for individuals to choose between different charities/NGOs). They might also be used by partner governments in deciding who best to work with.

Rankings can spur improved performance within organizations through professional pride. I recall one organization I know being surprised that it rated much worse than another similar organization on transparency, and quickly drafting a plan to catch up with and overtake it.

The bad:

Rankings inevitably lead to lots of debate about the quality of the data, the relevance of the indicators and so on, both as legitimate critique and as a way of deflecting attention from a poor rating. Finding criteria to judge "best" in development work would be rather daunting, given the wide range of views about what aid and development are, and the difficulty of objectively measuring the contribution of aid to development. Good luck finding criteria that everyone agrees on!

If the rankings are used as an input to funding or partnering decisions, then the better performers have an incentive not to share what they know to help the poorer performers improve, just so they can maintain their leading position. This might be good for the better-performing organizations and for donors, but it is probably not good for development as a whole.

Comment by Hannah

Dear Ian

Thanks so much for reading and for your response. These are all very valid points, and we should bear them in mind as we proceed. In addition to the points you raise, we also need to recognise that, as with children, there can be very real barriers to organisations and countries moving up the rankings, even if they want to. So it is important always to look at qualitative information as well as the rankings to understand what is really going on.

I would be interested in your views, and those of other readers, on whether there are development effectiveness issues other than transparency that would be worth ranking or scoring sooner than others.

Hannah

Comment by Anonymous

Odd that DFID should start complaining in this way. They used to publish the results of their Paris Declaration indicators in reports to Parliament, and then stopped doing so... a step backwards for transparency, no?

As for the independence of international organisations: they are supposed to be "independent in character" - but sadly they're prone to agenda capture by individual members (the UK is quite good at this, with its parallel aid reviews and numerous "initiatives").

Comment by Przemek

@Ian Thorpe I totally agree with you. This is a really great initiative. Thanks for your response, and feel welcome to visit Poland: http://www.escape2poland.co.uk